There’s a familiar story in securities trading.

A team builds a model that performs beautifully in backtests. The results are clean. The signal is strong. The Sharpe ratio looks great. Everyone is confident.

Then they deploy it.

And it loses money.

Not because the math was wrong. Because the model wasn’t built for the real world - it was built for a simulation of the past.

This dynamic is well understood in quant circles - there’s a growing body of writing on why “profitable” backtests fall apart the moment they go live, once real-world constraints and execution enter the picture.

Marketing is running into the exact same problem.

When Explanation Gets Mistaken for Reality

Media Mix Models, in particular, have a way of inspiring confidence. They take messy, multi-channel data and turn it into something that feels precise: neat decompositions, directional coefficients, a clear answer to the question, “What’s working?”

And to be fair, they do work - at least in the narrow sense they were designed for. They explain historical performance. Often quite well.

But explanation isn’t the same thing as prediction. And it’s definitely not the same thing as execution.

That’s where things start to break.

The Recommendation Gap

A model suggests reallocating budget: shift spend into digital video, pull back on paid search, lean into a channel that shows strong marginal returns. The recommendation is rational. It’s supported by data. It aligns with the model’s internal logic.

So the team executes.

And the lift never shows up.

At that point, the post-mortem usually turns inward. Maybe the lag structure was off. Maybe a variable was missing. Maybe the model needs another iteration.

But those explanations assume the problem lives inside the model.

More often, it doesn’t.

The Missing Layer: How Marketing Actually Gets Executed

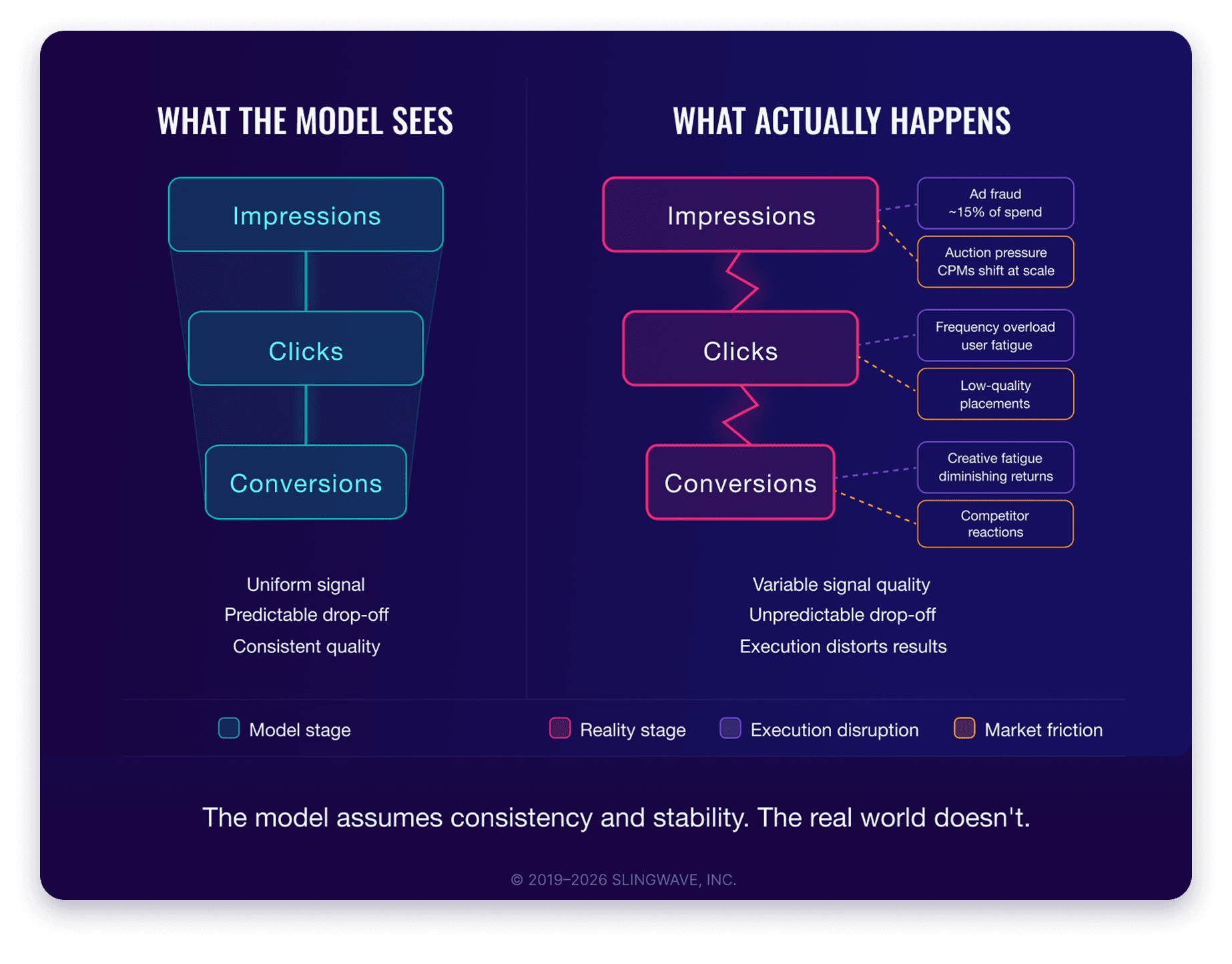

The real issue is that most marketing models ignore how marketing actually gets executed.

They assume that if you buy more media, you get more of the same media. That the impressions you win tomorrow will look like the impressions you observed yesterday. That audiences behave consistently, inventory is stable, and delivery is uniform.

None of that is true.

In practice, media buying is messy. It’s constrained. It’s adversarial.

The inventory you get when you scale spend isn’t just “more” - it’s different. Frequency changes. Placement quality shifts. Auction dynamics take over. Creative fatigue sets in. Sometimes you’re just buying the impressions no one else wanted.

The model counts an impression as an impression. The real world doesn’t.

Securities trading has a term for this: execution risk.

A strategy might identify an opportunity, but what matters is whether you can actually capture it. Prices move. Liquidity disappears. Orders get filled differently than expected. The act of participating in the market changes the outcome.

In other words, execution isn’t a detail - it’s part of the system.

Marketing models tend to treat it as an afterthought.
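
To make the "an impression is not just an impression" point concrete, here is a minimal sketch. It assumes, purely for illustration, that each repeat exposure to the same person is worth a fixed fraction of the one before it. The `decay` value, the function name, and the exposure counts are all hypothetical, not taken from any real campaign or model.

```python
def effective_impressions(exposures_per_user, decay=0.7):
    """Discount repeat exposures geometrically: 1 + decay + decay**2 + ...

    `decay` is an illustrative assumption, not a measured quantity.
    """
    total = 0.0
    for n in exposures_per_user:
        total += sum(decay ** k for k in range(n))
    return total


# Hypothetical exposure counts per user at the current spend level.
baseline = [1, 2, 1, 3]
# Assume doubled spend lands on the same users, doubling their frequency.
scaled = [2, 4, 2, 6]

# What a model that counts every impression equally would see.
raw_ratio = sum(scaled) / sum(baseline)
# What the discounted view sees for the same buy.
effective_ratio = effective_impressions(scaled) / effective_impressions(baseline)

print(f"raw impressions grew {raw_ratio:.2f}x")
print(f"effective impressions grew {effective_ratio:.2f}x")
```

Under these assumptions the raw count doubles while the discounted count grows by noticeably less. The exact gap depends entirely on the assumed decay, which is the point: the number a model ingests and the value actually delivered can drift apart as spend scales.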

The Myth of a Static Market

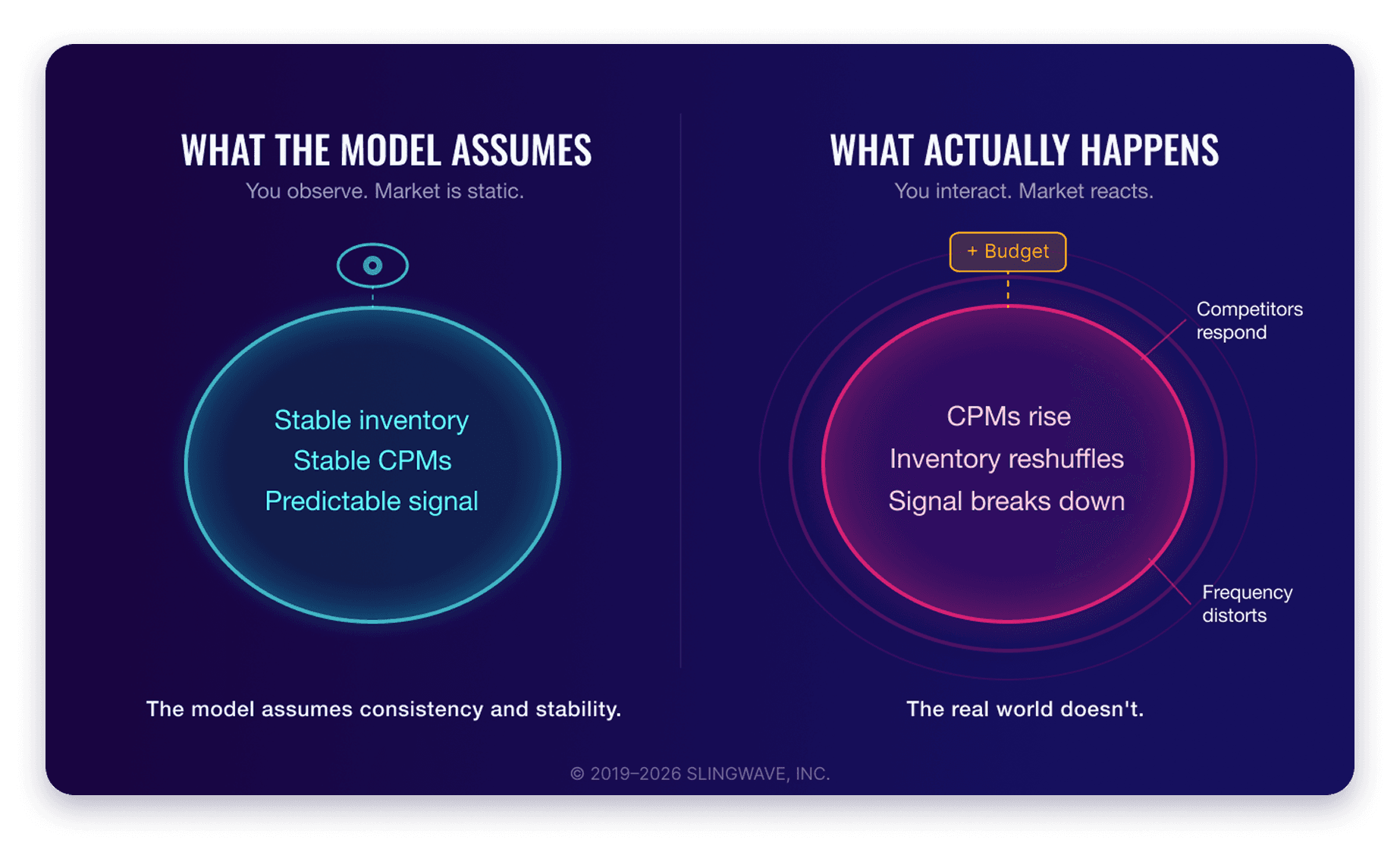

There’s a deeper assumption underneath all of this: that the market is static.

Most MMMs are built on historical relationships that implicitly assume stability. If you spend more in a channel, you move along a predictable response curve. Returns diminish, but in a smooth, knowable way.

But marketing doesn’t operate in a vacuum.

If you pour money into a channel, CPMs rise. Competitors respond. Inventory gets reshuffled. The very conditions that produced the original signal start to change.

The model assumes you’re observing the system.

In reality, you’re interacting with it.

And once you interact with it, the rules change.
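
A rough sketch of that difference, assuming a simple power-law relationship between your spend and the CPM you pay. The `elasticity` parameter, the function names, and the dollar figures are hypothetical, chosen only to show the shape of the effect rather than to describe any real auction.

```python
def impressions_static(spend, base_cpm):
    """Static-market view: CPM stays fixed no matter how much you spend."""
    return spend / base_cpm * 1000


def impressions_with_price_impact(spend, base_cpm, base_spend, elasticity=0.3):
    """Interactive view: CPM rises as spend grows past the observed level.

    Assumes cpm = base_cpm * (spend / base_spend) ** elasticity, which is an
    illustrative functional form, not an empirical one.
    """
    cpm = base_cpm * (spend / base_spend) ** elasticity
    return spend / cpm * 1000


base_spend = 100_000  # hypothetical historical spend level
base_cpm = 10.0       # hypothetical historical CPM in dollars

for spend in (100_000, 200_000, 400_000):
    static = impressions_static(spend, base_cpm)
    impacted = impressions_with_price_impact(spend, base_cpm, base_spend)
    print(f"spend={spend:>7,}  static={static:>12,.0f}  with impact={impacted:>12,.0f}")
```

At the historical spend level the two views agree, which is exactly why the static assumption looks fine in hindsight. They only diverge once you act on the recommendation and move spend away from where the data was observed.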

The Recency Problem

Time makes this worse.

The cadence of most MMMs - quarterly, sometimes annual - made sense in a slower media environment. But digital channels don’t sit still. Algorithms change. Policies shift. Audiences migrate. Entire platforms rise and fall in a fraction of the time it takes to refresh a model.

By the time a recommendation is implemented, the world it was based on may already be gone.

In trading, a stale signal is a losing signal.

Marketing is learning the same lesson, just more slowly.

Competition: The Variable Models Ignore

Then there’s competition - the variable most models quietly sidestep.

In trading, you’re always aware there’s someone on the other side. Your outcome depends on what they do.

In marketing, the equivalent is obvious but often ignored: your performance depends on what else is in-market.

It isn’t just about your bids. It’s about who you’re bidding against.

Strip competition out of the model, and you’re not simplifying reality - you’re distorting it.

None of this means MMM is useless. Far from it.

It just means we’ve been asking it to do something it was never designed to do.

We’ve taken a tool built for explaining the past and used it as a guide for navigating a dynamic, competitive, real-time system. Then we’re surprised when the translation breaks.

The gap isn’t a rounding error. It’s structural.

Bringing the Real World Back Into the Model

There’s a moment in trading interviews where candidates are asked a deceptively simple question:

“You have a model that works in backtests. You deploy it and it starts losing money. What did you forget?”

The expected answer isn’t about math.

It’s about reality.

You forgot that markets respond. You forgot that execution matters. You forgot that the system you modeled isn’t the system you’re operating in.

Marketing is arriving at that same realization.

The industry is full of models that look right because they describe a world that already happened. But the moment you act on them, you’re in a different world - one where media is delivered imperfectly, competitors react, and outcomes are shaped as much by execution as by strategy.

The next evolution in measurement won’t come from squeezing more precision out of historical data.

It will come from bringing the real world into the model - treating execution, competition, and market dynamics not as externalities, but as core inputs.

Because until then, we’re not modeling marketing.

We’re modeling a version of it that only exists after the fact.

The question isn't whether your model is good. It's whether your model is built for the world you're actually operating in. If you're not sure, that's worth a conversation. Let's talk.

Paul Boruta

Founder & CEO